- frontend: App program -> emit streaming data to backend
- backend: kafka -> storm / spark / dataflow -> data store -> reuse

The drawback of splitting the system into a frontend and a backend this way is that the backend is complex and slow (e.g., distributed queuing, distributed stream processing) and the server-side system is difficult to build. In particular, managing the task topology of DAG-style or mini-batch-style jobs is hard. This is the world of Storm and Spark, which are now becoming legacy.

So how does this change in Serverless?

- App program: pipeline of lightweight functions

To achieve this, lightweight function services must be provisioned at runtime, and that is what Serverless Computing provides.

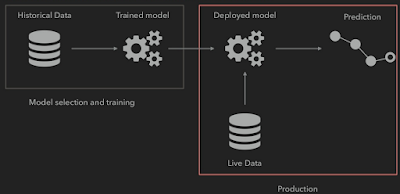
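As a rough illustration of what "a pipeline of lightweight functions" could look like, here is a minimal sketch in plain Python. The step names (`parse`, `enrich`, `store`), the event shape, and the in-memory `SINK` are all hypothetical stand-ins; on a real serverless platform each step would be a separately deployed function and the hand-off between them would be done by the platform, not by `reduce`.

```python
from functools import reduce
from typing import Callable

Event = dict
Handler = Callable[[Event], Event]

# Hypothetical in-memory stand-in for the data store.
SINK: list = []


def parse(event: Event) -> Event:
    # Turn the raw payload emitted by the app into a number.
    return {**event, "value": float(event["raw"])}


def enrich(event: Event) -> Event:
    # Attach a derived field; the threshold is an arbitrary example.
    return {**event, "is_large": event["value"] > 100.0}


def store(event: Event) -> Event:
    # Persist the event (here: append to a list) and pass it on.
    SINK.append(event)
    return event


def pipeline(*steps: Handler) -> Handler:
    # Compose small functions so each event flows through them in order.
    def run(event: Event) -> Event:
        return reduce(lambda acc, step: step(acc), steps, event)
    return run


handle = pipeline(parse, enrich, store)
result = handle({"raw": "120.5"})
```

Each function is stateless and only knows about its own input and output, which is what lets a platform provision and scale the steps independently.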

As another example, consider the prediction model of Google Flights introduced in the previous post. The traditional approach would be to implement the prediction service by deploying a model trained on the existing dataset:

Machine Learning can also be implemented with the Serverless Computing Architecture in the fashionable DevOps style. The following is the Serverless Architecture of the event-driven prediction algorithm:

The advantage of this is that the complexity of the implementation and system is dramatically reduced, and it is possible to design the system with a decentralized architecture.
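To make the event-driven idea a little more concrete, the following is a small sketch of a prediction function that is invoked once per incoming event. Everything in it is assumed for illustration: the logistic model, the parameter values, the feature names, and the `on_event` entry point are hypothetical and do not reflect how Google Flights or any specific serverless platform actually works.

```python
import math

# Hypothetical pre-trained parameters. In practice they would be loaded
# from storage when the function instance starts.
MODEL = {
    "bias": 1.0,
    "weights": {"days_to_departure": -0.05, "seats_left_ratio": -2.0},
}


def predict(features: dict) -> float:
    # Logistic regression: sigmoid of the weighted sum of the features.
    z = MODEL["bias"] + sum(
        weight * features[name] for name, weight in MODEL["weights"].items()
    )
    return 1.0 / (1.0 + math.exp(-z))


def on_event(event: dict) -> dict:
    # Entry point called once per event; it keeps no state between calls.
    return {"id": event["id"], "probability": predict(event["features"])}


response = on_event(
    {"id": "q1", "features": {"days_to_departure": 20, "seats_left_ratio": 0.0}}
)
```

Because the handler holds no state of its own, there is no long-running prediction server to manage; the platform can start as many copies as the event rate requires.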
